Adobe has announced the official availability of in-camera Frame.io Camera to Cloud integration for the RED V-RAPTOR and V-RAPTOR XL.

In 2011, director David Fincher made The Social Network, the first film shot on the RED ONE camera with the Mysterium-X sensor, with Light Iron handling the digital intermediate and finishing. After that project, Fincher asked how he could automatically receive clips sent straight from the camera and play them back with accurate timecode, without having to offload them first. It was a completely new concept at the time, and no one knew whether it could be done.

Director David Fincher and the RED ONE camera on location for The Social Network. ©Columbia Pictures

Twelve years later, pioneers like Emery Wells and John Traver, the creators of Frame.io, together with a team at RED whose company philosophy is to run toward challenges, have made that workflow a frictionless reality.

Michael Cioni, senior director of global innovation at Adobe, said:

Cioni: I believe this points to the future of all filmmaking workflows. It is no exaggeration to say that this integration is groundbreaking.

So how can we be so sure that this integration is groundbreaking? We have used this workflow ourselves and have been amazed by its ease of use, speed, and reliability. But what surprised us most was being able to collaborate with all the key creatives in real time as we worked, which gave us complete creative control over the project while we were still on set.

Cioni: You no longer have to wait for your camera card to be offloaded; you can get your take to the people involved right away. Editors can start cutting while you shoot. Producers and other creatives can watch takes and comment on them. Elements can be passed to a second unit shooting at the same time. Marketing teams can even start activations during production. In fact, when we produced the video announcing this new integration, we uploaded over 2TB of data directly from camera to cloud on the first day of shooting alone, and over 4TB in total, without ever offloading a MAG or backing up to local or on-premises media. It all uploaded without a hitch.

What we did that day was technically a first. But more importantly, why did we do it, and what does it mean for the industry in the years to come and beyond?

A Shared Mission

Cioni: Emery and John founded Frame.io because, as creators, they deeply understood how important precise, frictionless collaboration is to the creative process. Their mission was to create a standard cloud operating system that would centralize creators, collaborators, and content. When Frame.io hit the market in 2015, it was like no other.

When I joined in 2019, it was with the express purpose of developing Camera to Cloud, an idea I had been pursuing since the early days with director David Fincher and Light Iron. The synergy between Frame.io’s mission and my work was essential and undeniable. And because we have an in-house production team, we were able to evolve this workflow by putting it through the toughest test cases our engineering team could ask for. Our production team works in Frame.io every day, from the moment we receive the creative brief to the delivery of the final assets.

Cioni: We champion this technology not because we built it, but because we are its ideal customer. Understanding every challenge our customers face is part of our DNA. Browse our productions and you’ll see work of the highest quality. What’s harder to see is how much this new workflow has changed the way we create that work.

So when we shot for RED’s big reveal, we knew it was time to show the world how we were doing it.

We set new challenges for each shoot, testing specific workflows, use cases, and features. For this shoot, we used the V-RAPTOR and V-RAPTOR XL to capture 8K REDCODE RAW R3D files, along with custom CDLs from the DIT, ProRes LT proxy files, WAV files, and custom LUTs associated with each take. We insisted on sending everything directly to the cloud (over 4TB of 8K REDCODE RAW straight to Frame.io) without offloading a single media card.
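The CDLs mentioned above follow the ASC Color Decision List, a standard that describes a primary grade with ten numbers: a slope, offset, and power value for each of the red, green, and blue channels, plus a single saturation value. As a minimal sketch of how those numbers are applied, assuming RGB values normalized to 0-1 and the Rec. 709 luma weights the ASC CDL uses for saturation (the `CDL` class and `apply_cdl` function are illustrative names, not part of any RED or Frame.io tool):

```python
"""Minimal sketch of the ASC CDL transform: slope, offset, power, then saturation.

Assumes RGB values normalized to 0.0-1.0. Names here are illustrative only.
"""
from dataclasses import dataclass


@dataclass
class CDL:
    slope: tuple[float, float, float] = (1.0, 1.0, 1.0)
    offset: tuple[float, float, float] = (0.0, 0.0, 0.0)
    power: tuple[float, float, float] = (1.0, 1.0, 1.0)
    saturation: float = 1.0


def apply_cdl(rgb: tuple[float, float, float], cdl: CDL) -> tuple[float, ...]:
    """Apply a CDL to one RGB pixel and return the graded pixel."""
    sop = []
    for value, s, o, p in zip(rgb, cdl.slope, cdl.offset, cdl.power):
        # out = (in * slope + offset) ** power, clamped before the power step
        # so a negative base is never raised to a fractional power.
        v = min(max(value * s + o, 0.0), 1.0)
        sop.append(v ** p)
    r, g, b = sop
    # Saturation scales each channel's distance from Rec. 709 luma.
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b
    out = tuple(luma + cdl.saturation * (c - luma) for c in (r, g, b))
    # Clamp again after saturation.
    return tuple(min(max(c, 0.0), 1.0) for c in out)


# Example: a DIT-style warm-up with slightly reduced saturation (invented values).
warm = CDL(slope=(1.05, 1.0, 0.95), offset=(0.01, 0.0, -0.01), saturation=0.9)
print(apply_cdl((0.4, 0.4, 0.4), warm))
```

This sketch clamps to 0-1 before the power step and after saturation; other implementations may treat out-of-range values differently, and nothing here describes how the camera or the DIT’s software applies CDLs internally.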

Cioni: In fact, there were no hard drives anywhere on set. And with this new RED integration, we also wanted to demonstrate that off-speed footage could be recorded and played back accurately, with full parity between proxies and OCF, so we added a snowy element to the creative.

Not only did it work, it worked with complete reliability. RED’s engineers built the first cinema camera that can automatically offload RAW and proxy files over the network directly to the cloud. Once media arrives in Frame.io, it is checksummed and ready to share. Frame.io’s storage is backed up many times over, so your media has a 99.9999999% chance of staying safe (a real figure from our experts).
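The article doesn’t say which checksum Frame.io computes. As a hypothetical illustration of what “checksummed” means in practice, the sketch below hashes a file in fixed-size chunks and compares the result with a digest reported by the receiving side. SHA-256 and the function names `file_checksum` and `verify_upload` are assumptions for illustration, not Frame.io’s implementation.

```python
"""Hypothetical sketch of post-upload checksum verification.

SHA-256 and these function names are assumptions; they do not describe
Frame.io's internal implementation.
"""
import hashlib
from pathlib import Path


def file_checksum(path: Path, chunk_size: int = 8 * 1024 * 1024) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        # Reading in chunks keeps multi-gigabyte camera files out of memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_upload(local_path: Path, remote_checksum: str) -> bool:
    """A copy is trusted only if both ends report the same digest."""
    return file_checksum(local_path) == remote_checksum
```

The design point is simply that both sides hash the same bytes independently; if even one bit changed in transit, the digests would differ and the clip could be re-sent.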

When we decided to format a MAG mid-shoot, we could already see its media in Frame.io, neatly organized in folders. Even when the internet connection dropped, the clips we had shot uploaded automatically and accurately the moment it was restored.

This workflow is also possible with RED KOMODO, RED’s smallest and most widely used cinema camera. Beta firmware has been released that lets RED KOMODO send up to 6K RAW or 4K ProRes directly to Frame.io.

Cioni: Historically, physical media workflows have followed a fixed sequence of steps. You have to offload the media card to a hard drive, ship (and receive) the hard drive, process the dailies, send them to your editor, and then someone has to ingest them into the NLE. In other words, the creative process has historically been bound to a chain of events that can’t really be reordered, and the flow of creativity has been heavily constrained by the physical world.

This workflow matters because it removes the barriers that process imposes, using the cloud to open up collaboration and give you more creative control. We’re just beginning to explore the possibilities, and over time we’ll discover new ways of working that we haven’t even thought of yet. That’s what makes it so exciting.

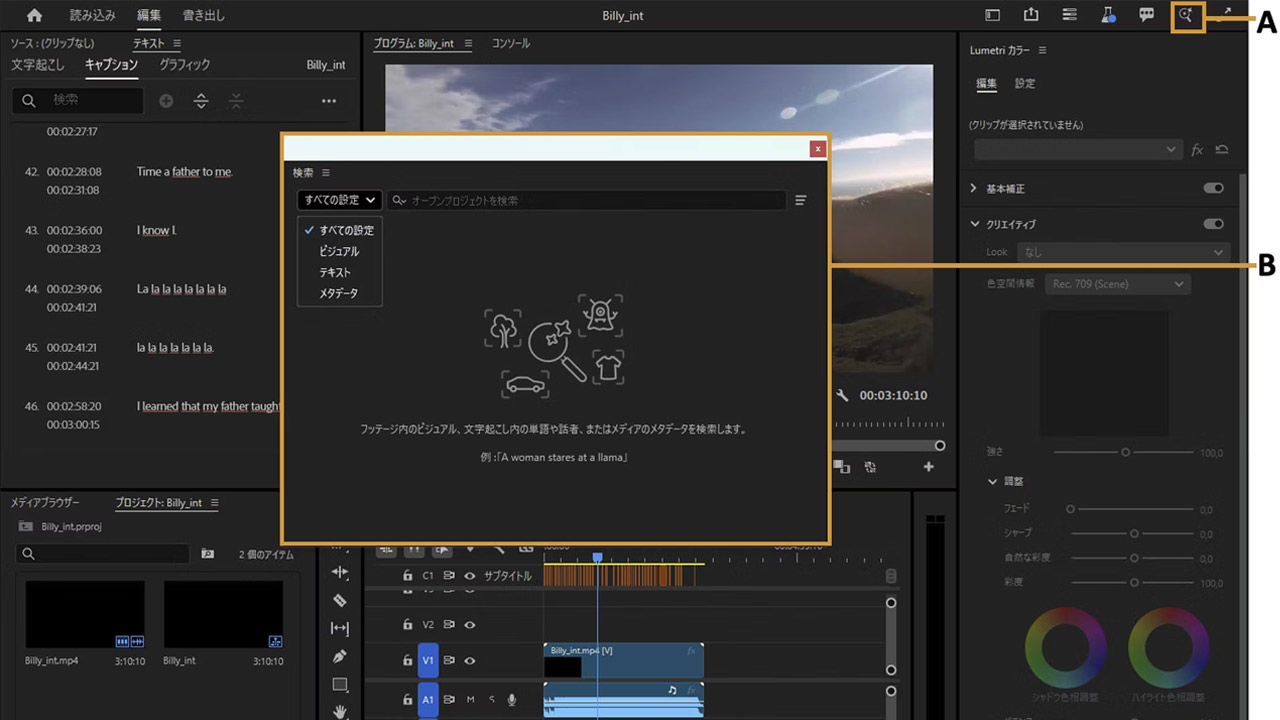

Cioni: This process not only provides peace of mind and security, it also means post can start while the shoot is still in progress. On our production, editors and assistant editors were cutting takes in Premiere Pro as soon as they arrived, and the colorist was previewing the look of the 8K files while the director was still shooting.

Cioni: Our editors are sometimes on set and sometimes work from there, but not always. Either way, takes arrive just as quickly whether the editor is on set or not. The key is to stay flexible about what works best for each shoot and to put the technology to creative use.

Communication is direct and instant, with no barriers between production and post. As soon as a take is uploaded to Frame.io, any collaborator or stakeholder you grant access to can comment on it and work with it.

Cioni: Imagine an editor looking at a take and wanting to suggest a different framing that better matches the scene. Or a VFX supervisor who needs to compare foreground elements against plates to check whether the lighting is working. Or a second unit director who needs to reference what the main unit is shooting. In each case, feedback can reach the set on the fly, so the necessary adjustments can be made right away.

Of course, this workflow was already possible with hardware from Teradek and Atomos. But in-camera integration is a meaningful sign of what’s to come.

Moving original assets to the cloud is the key to a faster, more streamlined, and more location-independent workflow. And the cloud ecosystem is powerful not just for camera files, but also for audio files, location photos, lighting setups, camera reports, script notes, and more.

Comfortable with Discomfort

Cioni: When you look back at the big workflow changes the industry has gone through, from film to tape, tape to file, and SD to HD, each one felt strange at first. Even when I knew a change would improve the way I worked, I had to get used to doing things differently.

Early adopters of new technology are the ones who seize opportunities. That’s one of the reasons our partnership with RED has been so successful. Since its introduction in 2006, RED has continued to challenge the status quo. They are comfortable with discomfort because they know discomfort leads to something better, whether that’s higher image quality, a faster workflow, or both.

Cioni: In 2014, around the time Light Iron was acquired by Panavision, RED was in the early stages of developing the first 46mm large format 8K sensor, and Panavision had just introduced its Primo 70 series, an early line of large format lenses. Light Iron set up a booth at Cine Gear Expo to capture the first 8K footage and play it back on a 105-inch 8K panel. We also handed out commemorative shirts that read ‘8K Possible’ to mark the significance of the moment. At the time, not everyone saw the potential of this technology. Some people laughed: “You don’t need to shoot 8K on a large format sensor!”

Cioni: In other words, a period of discomfort opens up new possibilities in video production, and eventually those possibilities become the industry standard.

And once something becomes the standard, it becomes easier. Today, large format and 8K sensors are standard, and we are already exploring 12K and beyond.

New technologies, even ones once dismissed as fads, don’t become established overnight. HD broadcasting, for example, was being discussed 20 years ago, but it took about 10 years before many people actually owned HD TVs. Digital projectors became a hot topic about 20 years ago, and it took 10 years for DLP projectors to become widespread in theaters. The move from SDR to HDR started being discussed five years ago, but it has only become commonplace in the last two years.

Cioni: Camera to Cloud is no different. It was first used on a feature film in July 2020. Since then, it has been used on over 6,000 productions to upload over 25,000 hours of content.

But that doesn’t mean everyone can use it today. Internet bandwidth is the next technical hurdle to taking full advantage of the cloud.

Bandwidth Matters

Cioni: You can’t talk about this workflow without talking about bandwidth, because today, at the end of 2022, the biggest constraint on it is internet availability and speed. The good news is that the continued growth of 5G, satellite internet, and WiFi 6 will increase bandwidth enough that moving RAW files will be commonplace by 2031. But for now, it’s important to understand the limitations of sending files to the cloud and what solutions are currently available.

Cioni: For this shoot, we used QNAP’s 5GBASE-T adapter to convert the camera’s USB-C data connection to Ethernet. This hardline was connected to a network switch on the stage and gave us an upload speed of around 750Mbps. At that speed, especially when shooting off-speed, uploads fell short of real time, but they were able to keep up by uploading between shots.
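To put the 750Mbps figure in perspective, here is some back-of-the-envelope arithmetic, assuming decimal units (1TB = 10^12 bytes), a fully saturated link, and no protocol overhead. The second function models the off-speed point under an assumption of our own: that data per frame stays constant, so the recorded data rate scales linearly with capture frame rate. The base bitrates used below are hypothetical, not published REDCODE figures.

```python
def upload_hours(data_tb: float, link_mbps: float) -> float:
    """Hours needed to push data_tb terabytes through a link_mbps uplink."""
    bits = data_tb * 8e12                # 1 TB = 10**12 bytes = 8 * 10**12 bits
    seconds = bits / (link_mbps * 1e6)   # 1 Mbps = 10**6 bits per second
    return seconds / 3600


def keeps_up_in_real_time(base_mbps: float, base_fps: float,
                          capture_fps: float, link_mbps: float) -> bool:
    """True if the uplink can match the recording data rate while rolling.

    Assumption: data per frame is constant, so shooting at 48 fps produces
    twice the data per second of shooting at 24 fps.
    """
    recording_mbps = base_mbps * capture_fps / base_fps
    return recording_mbps <= link_mbps


# The 2TB first-day upload over a 750Mbps line.
print(f"{upload_hours(2, 750):.1f} hours")  # 5.9 hours
```

By this arithmetic, the first day’s 2TB corresponds to roughly six hours of continuous upload at 750Mbps, which is why uploading between shots mattered so much.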

Most productions choose to upload HD ProRes LT, which requires about 82Mbps to upload in real time. But consider this: even if you only have 40Mbps of upload bandwidth, that shouldn’t be much of an obstacle, because a typical 6-10 hour shooting day produces only 1-2 hours of material. During downtime, such as setup changes or breaks in shooting, the spare bandwidth can be used for uploading.
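The 40Mbps example can be checked with simple arithmetic. This sketch assumes uploads happen only while the camera isn’t recording, so the time available for uploading is the shooting day minus the footage recorded; the function names are illustrative.

```python
def upload_hours_needed(footage_hours: float, proxy_mbps: float,
                        link_mbps: float) -> float:
    """Hours of upload for footage recorded at proxy_mbps over a link_mbps line."""
    # Total data is footage_hours * proxy_mbps; dividing by the link speed
    # gives the time needed to send it.
    return footage_hours * proxy_mbps / link_mbps


def fits_in_idle_time(footage_hours: float, proxy_mbps: float,
                      link_mbps: float, day_hours: float) -> bool:
    """True if uploads can finish during the non-recording part of the day."""
    idle_hours = day_hours - footage_hours
    return upload_hours_needed(footage_hours, proxy_mbps, link_mbps) <= idle_hours


# HD ProRes LT at about 82Mbps over a 40Mbps uplink.
for footage, day in [(1, 6), (1, 10), (2, 6), (2, 10)]:
    need = upload_hours_needed(footage, 82, 40)
    print(footage, day, round(need, 2), fits_in_idle_time(footage, 82, 40, day))
```

With these numbers, one hour of footage needs about 2.05 hours of upload and two hours need about 4.1 hours. That fits within the idle time of most of the 6-10 hour range; only the tightest case (two hours of footage in a six-hour day, leaving four idle hours) comes up slightly short, leaving a few minutes of upload after wrap.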

Cioni: It’s still much faster than waiting to pull the card, offload it, and then upload those files to the cloud. Also, keep in mind that the RED camera itself sends files directly to the cloud, so the clips you shoot are queued and automatically start uploading when the camera stops recording and goes idle.
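The queuing behavior described here, together with the automatic resume after a dropped connection mentioned earlier, can be modeled as a small state machine. This is a hypothetical sketch, not RED’s firmware or a Frame.io API: `UploadQueue` and the `upload` callback are illustrative names, and the callback stands in for whatever actually transfers a clip.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class UploadQueue:
    """Hypothetical model of camera-side upload queuing (not RED firmware)."""
    pending: deque = field(default_factory=deque)
    uploaded: list = field(default_factory=list)

    def clip_recorded(self, clip_name: str) -> None:
        # Every finished take joins the back of the queue.
        self.pending.append(clip_name)

    def tick(self, recording: bool, online: bool,
             upload: Callable[[str], bool]) -> None:
        # Stay quiet while the camera is rolling or the network is down.
        if recording or not online:
            return
        while self.pending:
            clip = self.pending[0]
            if not upload(clip):
                # Transfer failed (for example, the connection dropped):
                # keep the clip at the head of the queue for the next tick.
                return
            self.uploaded.append(self.pending.popleft())


# Two takes are recorded, then the camera goes idle with a connection.
q = UploadQueue()
q.clip_recorded("A001_C001")
q.clip_recorded("A001_C002")
q.tick(recording=True, online=True, upload=lambda clip: True)   # rolling: nothing sent
q.tick(recording=False, online=True, upload=lambda clip: True)  # idle: queue drains
print(q.uploaded)
```

Keeping a failed clip at the head of the queue, rather than dropping it, is what lets uploads resume in order once the connection returns.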

Even at 200Mbps, you can upload the ProRes proxies and keep the RAW files on hard drives, which still lets you start editing and get timely feedback from stakeholders. And because R3D RAW and ProRes files have full parity, you can edit with the ProRes proxies and relink to the RAW later.
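Relinking works because each proxy can be matched unambiguously to its camera original. As a hypothetical sketch of that matching step (not how Premiere Pro implements relinking), the code below pairs proxies with R3D files by shared clip name and start timecode. The `Clip` type, the `relink` function, and the assumption that proxy and original share a base file name are all illustrative.

```python
"""Hypothetical proxy-to-OCF matching by clip name and start timecode."""
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Clip:
    path: str
    start_timecode: str  # "HH:MM:SS:FF"

    @property
    def clip_name(self) -> str:
        # Assumption: proxy and original differ only in folder and extension.
        return PurePosixPath(self.path).stem


def relink(proxies: list[Clip], originals: list[Clip]) -> dict[str, str]:
    """Map each proxy path to its matching original path; skip unmatched proxies."""
    by_key = {(c.clip_name, c.start_timecode): c.path for c in originals}
    mapping = {}
    for proxy in proxies:
        match = by_key.get((proxy.clip_name, proxy.start_timecode))
        if match is not None:
            mapping[proxy.path] = match
    return mapping


print(relink([Clip("proxy/A001_C002.mov", "01:00:10:00")],
             [Clip("ocf/A001_C002.R3D", "01:00:10:00")]))
```

Requiring the timecode to match as well as the name guards against pairing two different takes that happen to share a name. In real projects, proxy and OCF file naming can differ, so a production tool would need to normalize names or use additional metadata first.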

In the last two years of using Camera to Cloud, I’ve discovered several network solutions to improve internet speeds or ensure internet connectivity in more remote locations. Providers such as Sclera Digital, Mr.Net and First Mile Technologies are experts in bringing the internet to your location with battery-powered, bonded, prioritized LTE, 5G and satellite mobile hotspots.

What Lies Ahead

Cioni: In two short years, we’ve gone from our first two hardware partners to more than a dozen hardware and software integrations. It’s also amazing to see the first in-camera integration arrive in that time, when previously the Camera to Cloud workflow required external hardware.

This pace of adoption and integration matters, and not just because it expands access to the workflow and its functionality. More importantly, it validates the predictions that have circulated widely in the industry since MovieLabs released its 2019 white paper, which laid out a vision of how the technology would evolve in the 2020s.

Cioni: We’re tracking toward a future in which, over the next eight years, all media and entertainment workflows move permanently to cloud-first technologies that improve access, speed, usability, and creative control like never before. Gone are the days of recording to a camera card, offloading it, and then uploading to the cloud. Physical media will no longer be needed, and every creative collaborator will be able to access and work with OCF instantly, anywhere in the world.

New workflows and inventions require risk-taking foresight. The RED community has been at the forefront of technological advancements for many years. We all know that the more efficient we are, the more creative we can be.

Cioni: At Frame.io, we see evidence of that every day in our own productions, and we’re starting to see filmmakers embrace new workflows in theirs. For those of us who remember David Fincher saying he wanted to watch dailies on an iPad, seeing a film like “Devotion” receive HDR dailies on iPhone and iPad brings a combination of excitement, satisfaction, and validation.

We want this community to grow. We welcome new pioneers and partners to bring the freedom of cloud-based workflows to more creators and accelerate their adoption.